Last Updated on April 6, 2026

AI search is changing how people find information.

Instead of typing keywords into Google, users now ask ChatGPT, Gemini, and Perplexity direct questions.

And they get answers instantly.

No blue links.

No scrolling.

No ten results per page.

That shift is forcing SEO to change and creating a new landscape for experts to navigate.

Today, search engines don’t just rank pages.

They generate answers.

And behind those answers are large language models (LLMs) deciding which websites to trust, quote, and cite.

This need for AI-friendly optimization has created a new discipline: LLM SEO.

LLM SEO is optimizing your website so AI systems can discover your content, understand it, and choose it as a source in AI-generated answers.

If your site isn’t visible to LLMs, you lose traffic before rankings matter.

In this guide, you’ll learn:

- What LLM SEO is and how it works

- How AI search engines find and rank websites

- What ChatGPT, Gemini, and Perplexity look for in sources

- How to optimize your content for AI search results

- How to track traffic from LLMs

By the end, you’ll know exactly how to prepare your website for the next phase of search.

Read More On: Best AI Video Generator Tools in 2026 (Top 7 Compared)

What is LLM SEO?

LLM SEO stands for Large Language Model Search Engine Optimization.

It is the process of optimizing your website so AI systems like ChatGPT, Google Gemini, Perplexity, and Google SGE can:

- Discover your content

- Understand it correctly

- Trust it as a reliable source

- Cite it in AI-generated answers

In traditional SEO, the goal is to rank pages on Google.

In LLM SEO, the goal is different:

Your goal is to become the source the AI chooses to quote.

Instead of competing for clicks, you are competing for citations.

Instead of ranking links, you optimize answers.

A Simple Definition of LLM SEO

Here is the simplest way to define it:

LLM SEO is the practice of optimizing content so large language models can retrieve, understand, and use it as a source in AI search results.

This includes:

- Formatting content so machines can parse it easily

- Using structured data and schema

- Building topical authority and brand signals

- Publishing clear, factual, and well-organized answers

If an AI system can’t understand your page, it will not use it.

What Does “LLM” Mean in SEO?

LLM stands for Large Language Model.

These are AI systems trained to read, understand, and generate human-like text.

Examples include:

- ChatGPT (OpenAI)

- Google Gemini

- Claude (Anthropic)

- Perplexity AI

When these systems answer a question, they don’t browse the web like humans.

They retrieve documents from search indexes, score them, extract the relevant passages, and then generate an answer using those sources.

That retrieval and selection process is what LLM SEO focuses on.

What LLM SEO Optimizes For

Traditional SEO optimizes for:

- Rankings

- Click-through rates

- Traffic

LLM SEO optimizes for:

- Source selection

- Citations and attributions

- Entity recognition

- Answer relevance

- Trust and authority signals

In many AI search results, users never click a link.

They read the answer and move on.

That means the most valuable position in AI search is not “rank #1”.

It’s:

Being the website the AI trusts enough to cite.

How LLM SEO Is Different From Traditional SEO

LLM SEO and traditional SEO are related.

But they are not the same thing.

Traditional SEO was built for search engines that rank links.

LLM SEO is built for systems that generate answers.

That difference changes everything.

Ranking Pages vs Selecting Sources

In traditional SEO, the goal is simple:

Rank your page as high as possible in Google.

Users scan the results.

They choose a link.

They click.

In LLM SEO, there is no list of links.

The AI selects a small number of sources, extracts information, and generates a single answer.

If your page is not selected, it is invisible.

You don’t compete for position #1.

You compete to be one of the few sources the AI trusts.

Keywords vs Entities

Traditional SEO is keyword-driven.

You optimize for:

- Exact keywords

- Search volume

- Keyword difficulty

LLM SEO is entity-driven.

AI systems care more about:

- Topics and concepts

- Brands and authors

- Relationships between entities

Instead of asking, “Does this page contain the keyword?”

LLMs ask:

“Is this website a trusted source on this topic?”

That’s why topical authority and entity SEO matter much more in AI search.

Backlinks vs Brand Mentions

Backlinks are still important.

But in LLM SEO, brand mentions and citations carry just as much weight.

AI systems learn authority from:

- Mentions across the web

- Publisher references

- Knowledge graphs

- Author profiles

A site that is widely mentioned, even without links, often performs better in AI answers than a site with only backlinks.

Trust is no longer only a link metric.

It’s a reputation signal.

Clicks vs Citations

Traditional SEO measures success with:

- Rankings

- Traffic

- Click-through rate

LLM SEO measures success differently.

The key metrics are:

- How often your site is cited

- How often your brand appears in AI answers

- Whether your content is used as a source

In many cases, users never visit your website.

But your brand still influences them through the AI answer.

Visibility now comes before the click.

Page Optimization vs Answer Optimization

Traditional SEO focuses on:

- Title tags

- Meta descriptions

- Keyword placement

LLM SEO focuses on:

- Clear definitions

- Structured formatting

- Direct answers

- Lists, tables, and summaries

AI systems prefer content that:

- Answers questions quickly

- Uses simple language

- Is logically organized

- Contains factual, verifiable information

The easier your content is to extract, the more likely it is to be used.

A Quick Comparison

Traditional SEO:

- Ranks pages

- Optimizes keywords

- Competes for clicks

- Focuses on backlinks

- Measures traffic

LLM SEO:

- Selects sources

- Optimizes entities

- Competes for citations

- Focuses on trust and citations

- Measures visibility

LLM SEO does not replace traditional SEO.

It builds on it.

The best strategy in 2026 is not choosing one or the other.

It’s combining both.

How AI Search Engines Work

AI search engines do not work like Google’s traditional crawler.

They don’t simply scan pages and rank links.

Instead, they combine three systems:

- Training data

- Live search retrieval

- Answer generation

Understanding this process is the foundation of LLM SEO.

Step 1: Training Data (How LLMs Learn Language)

Large language models are trained on huge datasets.

These include:

- Public websites

- Common Crawl archives

- Books and documentation

- Licensed publisher content

- Forums and technical resources

This training teaches the model:

- Language patterns

- Facts and concepts

- How topics relate to each other

But training data has a major limitation.

It is often months or years old.

That means LLMs cannot rely solely on training data for new information.

Step 2: Live Search Retrieval (How AI Gets Fresh Data)

Modern AI systems use live retrieval.

When a user asks a question, the system queries:

- Search engine indexes (Bing, Google)

- Proprietary crawling pipelines

- Publisher APIs and partnerships

This step is called retrieval.

The system pulls a set of relevant documents from the web.

These documents become the candidate sources for the answer.

If your website is not discoverable in these indexes, it will never be considered.

This is where classic SEO matters.

Step 3: Ranking and Passage Selection

After retrieval, the AI does not use every document.

It scores each one based on:

- Relevance to the question

- Authority of the source

- Freshness

- Content quality

Only a small number of pages survive this stage.

From those pages, the system extracts specific passages.

These passages become the raw material for the final answer.
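
The scoring step can be pictured as a weighted sum over the four signals listed above. The sketch below is illustrative only: the `Candidate` class, the weights, and the 0-to-1 signal values are assumptions made for this example, not figures any AI search engine has published.

```python
from dataclasses import dataclass

# Hypothetical weights for illustration; real systems do not publish theirs.
WEIGHTS = {"relevance": 0.4, "authority": 0.3, "freshness": 0.15, "quality": 0.15}


@dataclass
class Candidate:
    url: str
    relevance: float  # every signal is assumed to be normalized to 0..1
    authority: float
    freshness: float
    quality: float

    def score(self) -> float:
        """Weighted sum of the four signals."""
        return sum(getattr(self, name) * weight for name, weight in WEIGHTS.items())


def select_sources(candidates: list[Candidate], k: int = 2) -> list[Candidate]:
    """Keep only the k highest-scoring candidates; the rest are dropped."""
    return sorted(candidates, key=lambda c: c.score(), reverse=True)[:k]


candidates = [
    Candidate("https://example.com/a", 0.9, 0.8, 0.7, 0.9),
    Candidate("https://example.com/b", 0.9, 0.2, 0.9, 0.5),
    Candidate("https://example.com/c", 0.4, 0.9, 0.3, 0.8),
]
selected = select_sources(candidates)
print([c.url for c in selected])
```

With these made-up numbers, page `c` is dropped even though it has the highest authority, because its relevance is too low to carry it through.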

Step 4: Answer Generation

In the final step, the LLM generates an answer.

It combines:

- The user’s question

- Retrieved passages

- Its trained knowledge

The result is a natural-language response.

Sometimes the system shows citations.

Sometimes it does not.

But in every case, only a few sources influence the output.

Why This Matters for SEO

This pipeline explains why LLM SEO is different.

To appear in AI answers, your site must:

- Be discoverable in search indexes

- Be selected during retrieval

- Score highly during ranking

- Contain extractable passages

If you fail at any step, your content is left out of the answer.

You do not lose position.

You lose visibility.

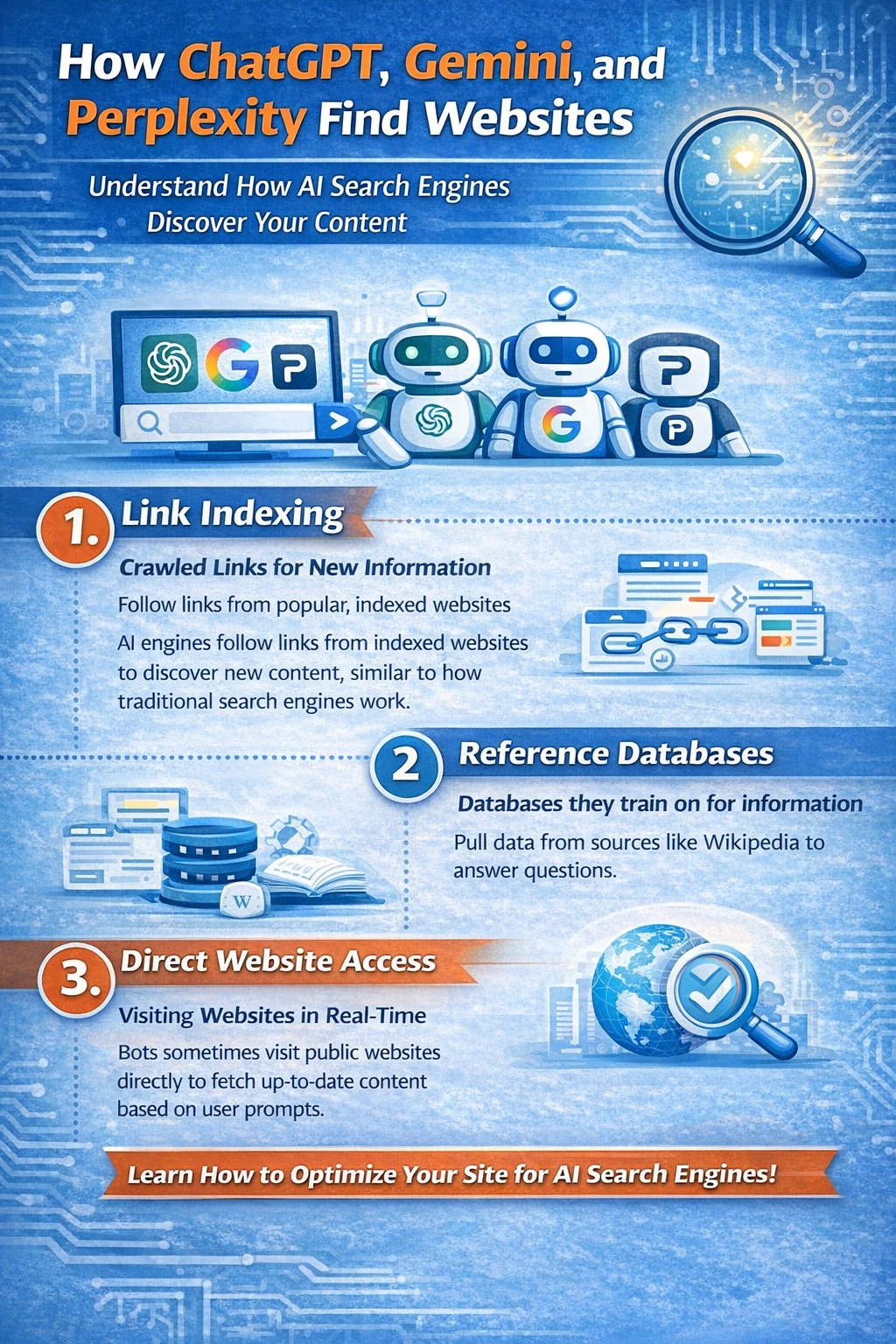

How ChatGPT, Gemini, and Perplexity Find Websites

AI search systems do not browse the internet like humans.

They rely on a layered discovery system built on search indexes, crawlers, and retrieval pipelines.

Understanding this system is essential for LLM SEO.

The Three Main Ways LLMs Discover Content

Modern AI search engines use three primary sources:

- Traditional search engine indexes

- AI-specific crawlers

- Licensed and partnered data sources

Each plays a different role.

1. Search Engine Indexes (The Primary Source)

Most AI systems do not maintain their own full web index.

Instead, they rely on existing search engines.

In practice:

- ChatGPT (with browsing) uses the Bing index

- Copilot is fully powered by Bing

- Perplexity uses Bing, its own crawler, and partner indexes

- Google Gemini / SGE uses Google’s main index

This means something important:

If your site is not indexed by Google or Bing, it will not appear in AI search results.

Classic SEO is still the foundation.

If your pages are blocked, poorly crawled, or not indexed, LLM SEO cannot work.

2. AI Crawlers and Retrieval Bots

In addition to search indexes, many AI platforms run their own crawlers.

Common examples include:

- GPTBot (OpenAI)

- PerplexityBot

- ClaudeBot

- AppleBot

These crawlers collect:

- Fresh content

- Structured data

- High-authority sources

- Frequently updated pages

Unlike Googlebot, these crawlers are selective.

They focus on:

- Trusted domains

- Technical documentation

- News and research sites

- Well-structured content

Here, machine readability becomes critical.

Pages are often skipped or ignored when they are:

- Slow

- Poorly structured

- Filled with ads or scripts
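
Whether these bots can reach a page at all is usually governed by robots.txt. As a minimal sketch, the snippet below uses Python's standard `urllib.robotparser` to check which AI crawlers a robots.txt file allows. The sample file contents, the `crawler_access` helper, and the example URL are assumptions for illustration, and the user-agent tokens should be verified against each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens for the crawlers named above (verify against vendor docs).
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Applebot"]


def crawler_access(robots_txt: str, url: str, agents=AI_CRAWLERS) -> dict[str, bool]:
    """Return, for each crawler, whether robots.txt allows it to fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Hypothetical robots.txt that blocks GPTBot but allows everyone else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

report = crawler_access(SAMPLE_ROBOTS, "https://example.com/guide/llm-seo")
for agent, allowed in report.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Running a check like this against your own robots.txt is a quick way to confirm you have not blocked an AI crawler by accident.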

3. Licensed Publishers and Data Partnerships

Large AI platforms also rely on licensed data.

This includes:

- News publishers

- Educational databases

- Documentation providers

- Proprietary knowledge sources

These sources are heavily weighted.

They provide:

- High-trust signals

- Fresh information

- Confirmed facts

This is one reason why authoritative sites are cited far more often in AI answers.

How Retrieval Actually Works (Behind the Scenes)

When a user asks a question, the system does not search the entire web.

Instead, it runs a retrieval query against a limited index.

This process usually follows this order:

- Interpret the intent of the question

- Generate multiple retrieval queries

- Pull the top candidate documents

- Filter by authority and freshness

- Rank passages, not just pages

Only a small number of documents survive this process.

In many systems, fewer than 20 pages influence the final answer.

And often fewer than 5 sources are actually used.
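
The steps above can be sketched as a toy pipeline. This is a simplified illustration, not how any specific AI search engine is implemented: `expand_query` stands in for model-driven query generation, plain term overlap stands in for semantic scoring, and the sample documents and URLs are invented.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def expand_query(question: str) -> list[str]:
    # Stand-in for query expansion; a real system would use a model here.
    return [question, question + " definition", question + " examples"]


def rank_passages(question: str, documents: dict[str, str], top_k: int = 2):
    """Score every paragraph (not every page) by term overlap with the queries."""
    query_terms: set[str] = set()
    for query in expand_query(question):
        query_terms |= tokenize(query)

    scored = []
    for url, text in documents.items():
        for passage in text.split("\n\n"):
            overlap = len(query_terms & tokenize(passage))
            if overlap:  # passages with no shared terms never become candidates
                scored.append((overlap, url, passage))

    scored.sort(key=lambda item: item[0], reverse=True)
    return scored[:top_k]


documents = {
    "https://example.com/llm-seo": (
        "LLM SEO is the practice of optimizing content for AI answers."
        "\n\nOur team loves coffee."
    ),
    "https://example.com/news": "Company picnic photos are now online.",
}
results = rank_passages("what is llm seo", documents)
for overlap, url, passage in results:
    print(overlap, url, passage)
```

Note that ranking happens per passage: the second paragraph on the first page is discarded even though the page itself is relevant.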

What Determines Whether Your Site Is Considered

At the discovery stage, LLMs evaluate:

- Index coverage (Google / Bing visibility)

- Crawl accessibility (robots, performance, rendering)

- Domain trust and history

- Topical relevance

- Freshness signals

This explains a key rule of LLM SEO:

If your site is not already trusted by search engines, LLMs will never see it.

LLM SEO does not replace technical SEO.

It amplifies it.

Freshness vs Training Data

One of the biggest misconceptions about LLMs is that they only use old training data.

In reality:

- Training data provides general knowledge

- Live retrieval provides current information

For most factual and SEO-related queries, modern AI systems:

- Prefer retrieved documents

- Weight recent content heavily

- Downgrade outdated sources

This means:

- Regular updates matter

- Timestamps matter

- Evergreen pages must be refreshed

Fresh authority beats old authority.

Why This Changes SEO Strategy

In traditional SEO, ranking on page one is enough.

In LLM SEO, ranking is only the first filter.

After ranking, your page must also:

- Be retrieved

- Be trusted

- Contain extractable passages

- Outperform competing sources

This is why many top-ranking pages do not appear in AI answers.

And why some lower-ranking pages are cited often.

The selection criteria are different.

What LLMs Look For When Choosing Sources

When an AI system generates an answer, it does not randomly select sources.

Every citation is the result of a ranking process designed to identify the most reliable and useful information for a specific question.

Understanding this process is the core of LLM SEO.

At a high level, LLMs evaluate three things: relevance, trust, and usability.

Relevance determines whether your content corresponds to the question’s intent.

Trust determines whether your website is considered authoritative enough to cite.

Usability determines whether the system can easily extract and reuse your content.

All three must be present.

Topical Relevance and Query Matching

The first filter is relevance.

When a user asks a question, the retrieval system searches for documents that directly address that topic.

This process is not based on exact keywords.

It is based on semantic similarity.

The system analyzes the query’s meaning, expands it into related concepts, and then retrieves documents that cover those concepts in depth.

Pages that clearly define a topic, explain related subtopics, and use consistent terminology are favored.

Shallow pages that only briefly cover the topic are usually filtered out early.

This is why topical depth matters more than keyword density in LLM SEO.

The goal is not to match a phrase.

The goal is to demonstrate that your page is about the subject.
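
Semantic similarity is commonly measured as the cosine of the angle between two embedding vectors. The sketch below shows the calculation with made-up three-dimensional vectors; real embedding models produce vectors with hundreds of dimensions, and the page names and values here are purely illustrative.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up "embeddings" for a query such as "how do AI engines pick sources".
query = [0.9, 0.1, 0.0]
pages = {
    "llm-seo-guide": [0.8, 0.3, 0.1],
    "cookie-recipes": [0.0, 0.1, 0.9],
}

ranked = sorted(
    pages,
    key=lambda name: cosine_similarity(query, pages[name]),
    reverse=True,
)
print(ranked)
```

A page can score highly here without sharing a single exact keyword with the query, as long as its vector points in a similar direction.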

Authority and Trust Signals

Once relevance is established, trust becomes the dominant factor.

LLMs strongly prefer sources that appear authoritative across the web.

Authority is inferred from many signals.

Search engines look at backlink profiles, historical performance, and domain stability.

AI systems add additional layers.

They evaluate how often your brand or domain is mentioned in reputable publications.

They analyze whether your content is cited by other authoritative sources.

They examine whether your site is associated with recognized entities such as companies, authors, or institutions.

In many cases, a site with moderate rankings but strong brand recognition will be selected over a higher-ranking page from an unknown domain.

Trust is not a single metric.

It is an accumulated reputation.

Entity Recognition and Knowledge Graph Alignment

Modern LLMs do not treat websites as isolated pages.

They treat them as entities.

An entity can be a brand, a company, an author, a product, or a concept.

When your site is consistently associated with a specific topic, the system learns that relationship.

This happens through structured data, author profiles, Wikipedia entries, business listings, and repeated mentions across the web.

Once an entity is established, the model begins to favor it when questions related to that topic appear.

This is one of the reasons why established brands dominate AI citations.

They are not just ranking pages.

They are recognized entities.

Content Clarity & Extractability

Even if your page is relevant and trusted, it can still be rejected.

The final test is usability.

LLMs do not read content the way humans do.

They look for passages that can be safely extracted and reused.

Clear definitions, concise explanations, and logically structured sections perform best.

Long paragraphs with filler language, marketing copy, or ambiguous phrasing perform poorly.

AI systems prefer content that states facts directly.

They favor pages that answer questions in the first several sentences of a section.

They favor writing that avoids metaphors, hype, and unnecessary complexity.

The easier your content is to parse, the more likely it is to be cited.

Freshness and Temporal Signals

For many queries, freshness plays a decisive role.

AI systems downgrade sources that look outdated.

They look at publication dates, update history, internal links, and external references.

If two pages are equally authoritative, the more recent one usually wins.

This is especially true for topics related to technology, SEO, and AI.

Regular updates signal your site is actively maintained.

That alone can increase citation frequency.
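
One way to picture the freshness tie-break is an exponential decay on page age. The sketch below is an assumption-laden illustration: the 180-day half-life, the `freshness_score` and `pick_source` helpers, and the sample pages are all invented for this example.

```python
from datetime import date, timedelta

HALF_LIFE_DAYS = 180  # hypothetical: the score halves every ~6 months without an update


def freshness_score(last_updated: date, today: date) -> float:
    """Return 1.0 for a page updated today, decaying toward 0 as it ages."""
    age_days = max((today - last_updated).days, 0)
    return 0.5 ** (age_days / HALF_LIFE_DAYS)


def pick_source(pages: list[dict], today: date) -> dict:
    """Prefer higher authority; break ties with the freshness score."""
    return max(
        pages,
        key=lambda p: (p["authority"], freshness_score(p["updated"], today)),
    )


today = date(2026, 4, 6)
pages = [
    {"url": "https://example.com/old", "authority": 0.8,
     "updated": today - timedelta(days=900)},
    {"url": "https://example.com/recent", "authority": 0.8,
     "updated": today - timedelta(days=30)},
]
winner = pick_source(pages, today)
print(winner["url"])
```

Both pages have the same authority, so the one updated 30 days ago wins over the one untouched for 900 days.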

Why Some Sites Are Chosen Repeatedly

Over time, LLMs develop preferences.

When a source consistently produces accurate, well-structured answers, the system learns to trust it.

Those sites keep appearing across related queries.

This creates a feedback loop.

More citations lead to stronger entity signals.

Stronger entity signals lead to more citations.

This is how authority compounds in AI search.

LLM SEO Ranking Factors

LLM SEO does not rely on a single ranking signal.

It is the result of multiple systems working together to decide whether a page is trustworthy enough to influence an AI-generated answer.

Some of these signals come from traditional SEO.

Others are specific to retrieval and language models.

Together, they form the ranking framework behind AI search.

Topical Authority

Topical authority is the strongest signal in LLM SEO.

AI systems strongly prefer websites that demonstrate sustained expertise in a narrow subject area.

This isn’t measured by a single page.

It is measured across your entire domain.

When your site publishes multiple high-quality articles around the same topic, the system learns that your brand is a reliable source for that subject.

This is why content clusters matter so much.

A standalone article about LLM SEO is rarely cited.

A website with twenty related articles becomes a default source.

Authority is built through coverage, consistency, and depth.

Domain Trust and Historical Signals

Domain-level trust still plays a major role.

LLMs inherit much of their trust framework from search engines.

They evaluate how long a domain has existed, how stable it is, and how it has performed historically.

Sites with a long publishing history, clean backlink profiles, and consistent indexing are favored.

Spam signals, thin content, and frequent ownership changes reduce trust.

This explains why new domains struggle to appear in AI answers, even when their content is strong.

Trust builds slowly.

But once established, it becomes a powerful advantage.

Brand Mentions and Reputation Signals

In LLM SEO, mentions often matter as much as links.

AI systems assess how often a brand or website is referenced across authoritative publications.

They look for repeated associations between your brand and specific topics.

They examine whether journalists, researchers, and industry blogs cite your work.

These mentions feed directly into entity recognition.

A brand that appears frequently in trusted contexts is more likely to be selected as a source, even if its page does not rank first in Google.

This is one of the main reasons why digital PR is becoming a core part of AI search optimization.

Content Structure and Machine Readability

Once authority is established, content quality becomes decisive.

LLMs prefer pages that are easy to interpret.

Clear headings, logical section flow, and explicit definitions increase extractability.

Pages that explain a concept in a structured way regularly outperform pages that rely on storytelling or marketing language.

The first sentences of a section are especially important.

They are often used as summary candidates.

If your page does not clearly answer the question early, it is unlikely to be cited.

Formatting is not cosmetic in LLM SEO.

It is a ranking factor.

Structured Data and Semantic Markup

Structured data provides context that unformatted text cannot.

Schema markup helps AI systems understand what a page represents, who wrote it, what it is about, and how different entities are related.

Article schema, FAQ schema, Organization schema, and Person schema are especially influential.

While schema alone does not guarantee citations, it significantly improves comprehension.

In competitive queries, structured pages regularly outperform unstructured ones.

Schema acts as a translation layer between your content and the machine.

Backlinks and Citation Graphs

Backlinks remain important.

But their role is changing.

In LLM SEO, links are not only authority signals.

They are also part of a citation graph.

AI systems examine which sources reference each other and how information flows across the web.

Pages that are frequently cited by authoritative sites are more likely to be retrieved and trusted.

In many cases, a smaller number of high-quality editorial links has more impact than a large volume of low-quality backlinks.

Quality outweighs quantity more than ever.

Freshness and Update Signals

Freshness plays a stronger role in AI search than in classic SEO.

LLMs heavily favor recent content for technical and fast-changing topics.

They evaluate publication dates, update frequency, internal revision signals, and external references.

A well-maintained page that is updated every few months often outperforms an older authoritative article that has not been touched in years.

Freshness is not about rewriting everything.

It is about signaling your information is still valid.

Engagement and Usage Signals

Some AI systems include indirect engagement data.

This includes how often a source is selected, how long users interact with answers that cite it, and whether follow-up questions cite the same source.

While these signals are not fully transparent, they create a feedback loop.

Sources that perform well continue to be selected.

Sources that generate poor answers gradually disappear.

Performance strengthens authority.

LLM SEO ranking is not controlled by one trick.

It is the combined result of authority, trust, structure, and clarity.

When all four align, citations become predictable.

How to Optimize Content for LLM SEO

Optimizing for LLM SEO is not about rewriting everything.

It is about changing how information is presented so AI systems can retrieve, understand, and reuse it easily.

The goal is simple: make your content the easiest and safest source for an AI to cite.

Write in an Answer-First Format

LLMs prefer content that answers questions immediately.

When a section begins, the first sentence must define the concept or directly address the question.

This mirrors how retrieval systems extract passages.

Pages that delay the answer with introductions, anecdotes, or marketing language are often skipped.

The most cited pages usually place their definition or conclusion in the first two lines of a section.

This is not for readers.

It is for machines.

Use Relevant Headings That Match Search Intent

Headings act as anchors for retrieval.

When an AI scans a document, it looks at headings to understand what each section contains.

Headings that mirror real user questions perform best.

Instead of vague titles, precise phrasing works better.

A heading like “How LLMs Choose Sources” is far more effective than “Our Approach to AI Search.”

Matching natural language queries increases the probability that your section is retrieved for the right intent.

Structure Content for Extraction, Not Persuasion

Traditional SEO often prioritizes storytelling and persuasion.

LLM SEO prioritizes extractability.

Well-performing pages are logically segmented, use brief paragraphs, and avoid complexity.

Each section should explain one idea completely before moving to the next.

Long, multi-topic paragraphs are difficult for retrieval systems to parse.

Clear topic boundaries make it easier for the model to isolate usable passages.
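
To see why topic boundaries help, consider how a retrieval system might cut a page into standalone chunks. The sketch below assumes the page source is Markdown with `## ` section headings, which is an assumption for this example; `split_into_sections` is a hypothetical helper, not part of any library.

```python
def split_into_sections(markdown_text: str) -> dict[str, str]:
    """Map each '## ' heading to the text beneath it."""
    sections: dict[str, list[str]] = {}
    current = None
    for line in markdown_text.splitlines():
        if line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        elif current is not None and line.strip():
            # Non-empty lines belong to the most recent heading.
            sections[current].append(line.strip())
    return {heading: " ".join(lines) for heading, lines in sections.items()}


page = """## What is LLM SEO?
LLM SEO is optimizing content so AI systems can cite it.

## How do LLMs choose sources?
They score pages on relevance, trust, and usability.
"""

sections = split_into_sections(page)
for heading, body in sections.items():
    print(f"{heading} -> {body}")
```

Each chunk pairs a question-style heading with a self-contained answer, which is the shape a retrieval system can reuse without pulling in unrelated text.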

Use Definitions, Summaries, and Explicit Statements

LLMs are conservative.

They prefer sources that state facts clearly.

Definitions, explanations, and summaries are especially valuable because they can be reused safely.

Pages that hedge, speculate excessively, or rely on opinion language are cited less often.

The more direct your statements are, the more reusable they become.

This is why guides, documentation, and reference-style content dominate AI citations.

Reinforce Meaning With Internal Context

AI systems do not read an individual sentence in isolation.

They evaluate the surrounding context.

When you introduce a concept, reinforce it with consistent terminology across the page.

Avoid switching between multiple labels for the same idea.

Semantic consistency improves retrieval accuracy and reduces ambiguity.

This is especially important for new concepts like LLM SEO, where definitions are still evolving.

Optimize for Entities, Not Just Keywords

LLM SEO is fundamentally entity-based.

When you mention important concepts, brands, tools, or people, connect them clearly to their roles.

Explain what they are and how they relate to the topic.

This strengthens entity recognition and helps the system build a knowledge graph around your content.

Pages that establish strong entity relationships are much more likely to be cited repeatedly.

Maintain High Factual Density

AI systems favor pages with a high information-to-word ratio.

Fluff, filler phrases, and marketing language reduce the likelihood of citations.

The most cited pages are dense with explanations, definitions, and useful insights.

This does not mean writing dry content.

It means every sentence must add meaning.

Design for Reuse Across Multiple Queries

Strong LLM SEO content is modular.

Individual sections can stand alone as answers to different questions.

When a page covers multiple related subtopics in clearly separated sections, it becomes eligible for many different retrieval queries.

This is one of the reasons pillar pages perform so well in AI search.

They act as a library of answers inside a single URL.

Update Content as Models and Systems Change

LLM SEO is highly sensitive to freshness.

As models improve, ranking criteria shift.

Pages that are reviewed and updated regularly retain visibility.

This does not require frequent rewriting.

Often, small changes such as adding a new section, refreshing examples, or updating dates are enough to signal relevance.

Stale pages gradually disappear from AI answers.

Maintained pages compound authority.

Optimizing for LLM SEO is not about gaming algorithms.

It is about publishing content that algorithms can trust.

When your pages are clear, authoritative, structured, and current, selection becomes predictable.

Schema Markup and Structured Data for AI Search

Structured data plays a key role in LLM SEO.

While large language models can read plain text, they rely heavily on structured signals to understand what a page represents, who created it, and how different concepts are related.

Schema markup acts as a translation layer between human language and machine interpretation.

It reduces ambiguity.

And in AI search, ambiguity is the enemy.

Why Structured Data Matters More in AI Search

Traditional search engines use schema mainly for rich results and enhanced snippets.

AI systems use it differently.

They use structured data to build entity graphs, validate facts, and assign meaning to content.

When an LLM retrieves a document, it does not only read the visible text.

It also reads metadata.

Author information, organization details, publication dates, and content types all influence how trustworthy and reusable a source appears.

Pages with clean, consistent, structured data are easier to classify.

This alone increases their likelihood of being selected.

Schema as an Entity Signal

LLM SEO is deeply entity-driven.

Structured data helps explicitly define those entities.

When you mark up your organization, your authors, your products, or your articles, you are telling machines exactly who you are and what you represent.

This matters because AI systems rely on entity relationships to decide authority.

If your site is clearly associated with a recognized organization or expert author, it gains credibility.

If your pages lack identity signals, they remain anonymous.

Anonymous pages are rarely cited.
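
As an illustration of these identity signals, the sketch below builds schema.org Article markup as JSON-LD, linking the page to a Person author and an Organization publisher. The `article_jsonld` helper and every name and date passed to it are placeholders; substitute your own values.

```python
import json


def article_jsonld(headline: str, author_name: str, org_name: str,
                   published: str, modified: str) -> dict:
    """Build schema.org Article markup with author and publisher entities."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": org_name},
        "datePublished": published,  # ISO 8601 dates
        "dateModified": modified,
    }


markup = article_jsonld(
    headline="What Is LLM SEO?",
    author_name="Jane Doe",    # placeholder author
    org_name="Example Media",  # placeholder organization
    published="2026-01-15",
    modified="2026-04-06",
)

# JSON-LD is embedded in the page inside a script tag of this type.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

The printed tag can be placed in the page's HTML, and the result checked with a structured data validator before publishing.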

How Schema Improves Retrieval Success

Retrieval systems regularly filter documents before ranking them.

Structured data helps your page survive that filter.

Article schema clarifies that a page is an editorial resource.

FAQ schema highlights question-and-answer patterns.

Organization and Person schema connect content to actual entities.

These signals reduce misclassification.

They also help retrieval systems match your page to the correct query type.

A page that is clearly labeled as a guide, a definition, or a reference is more likely to be retrieved for informational questions.

Structured Data and Trust Validation

AI systems are conservative by design.

They avoid citing sources that appear ambiguous or unreliable.

Structured data provides verification signals.

Publication dates confirm freshness.

Author fields confirm accountability.

Organization markup confirms ownership.

In many systems, these fields are cross-checked against external knowledge graphs.

When they agree, trust increases.

When they conflict or are missing, the page may be downgraded or ignored.

This is one of the reasons why anonymous content performs poorly in AI search.

The Role of FAQ and HowTo Schema in LLM SEO

FAQ and HowTo schema have a special role in LLM SEO.

They explicitly map questions to answers.

This format mirrors how AI retrieval works internally.

Sections marked up with FAQ schema are often directly extracted into AI answers.

Not because the schema itself is ranked, but because it creates perfectly structured answer units.

Well-written FAQ sections frequently become citation candidates.

This is especially effective for technical and educational topics.
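
The question-to-answer mapping is explicit in the markup itself. As a minimal sketch, the snippet below generates schema.org FAQPage JSON-LD from a list of question and answer pairs; the `faq_jsonld` helper and the sample pairs are assumptions for illustration.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    ("What is LLM SEO?",
     "LLM SEO is optimizing content so AI systems can cite it."),
    ("Does schema guarantee citations?",
     "No. Schema improves comprehension but does not override authority."),
])
print(json.dumps(faq, indent=2))
```

Each `Question` carries exactly one `acceptedAnswer`, so every pair forms a self-contained answer unit.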

Structured Data Is Not a Shortcut

Schema does not override authority.

A weak site with perfect markup will not outperform a trusted site without it.

But when two authoritative pages compete, structured data often becomes the deciding factor.

It boosts comprehension.

It improves confidence.

And it improves extractability.

In competitive AI search queries, those small advantages add up.

The Long-Term Value of Structured Data

Schema also has a long-term benefit.

It feeds knowledge graphs.

Over time, your organization, authors, and topics become part of machine memory.

Once that happens, your site begins to appear more frequently across related queries.

This is how brands become default sources in AI search.

Not through one article.

Through consistent identity signals across the entire domain.

Structured data does not guarantee citations.

But without it, sustained visibility in AI search becomes much harder.

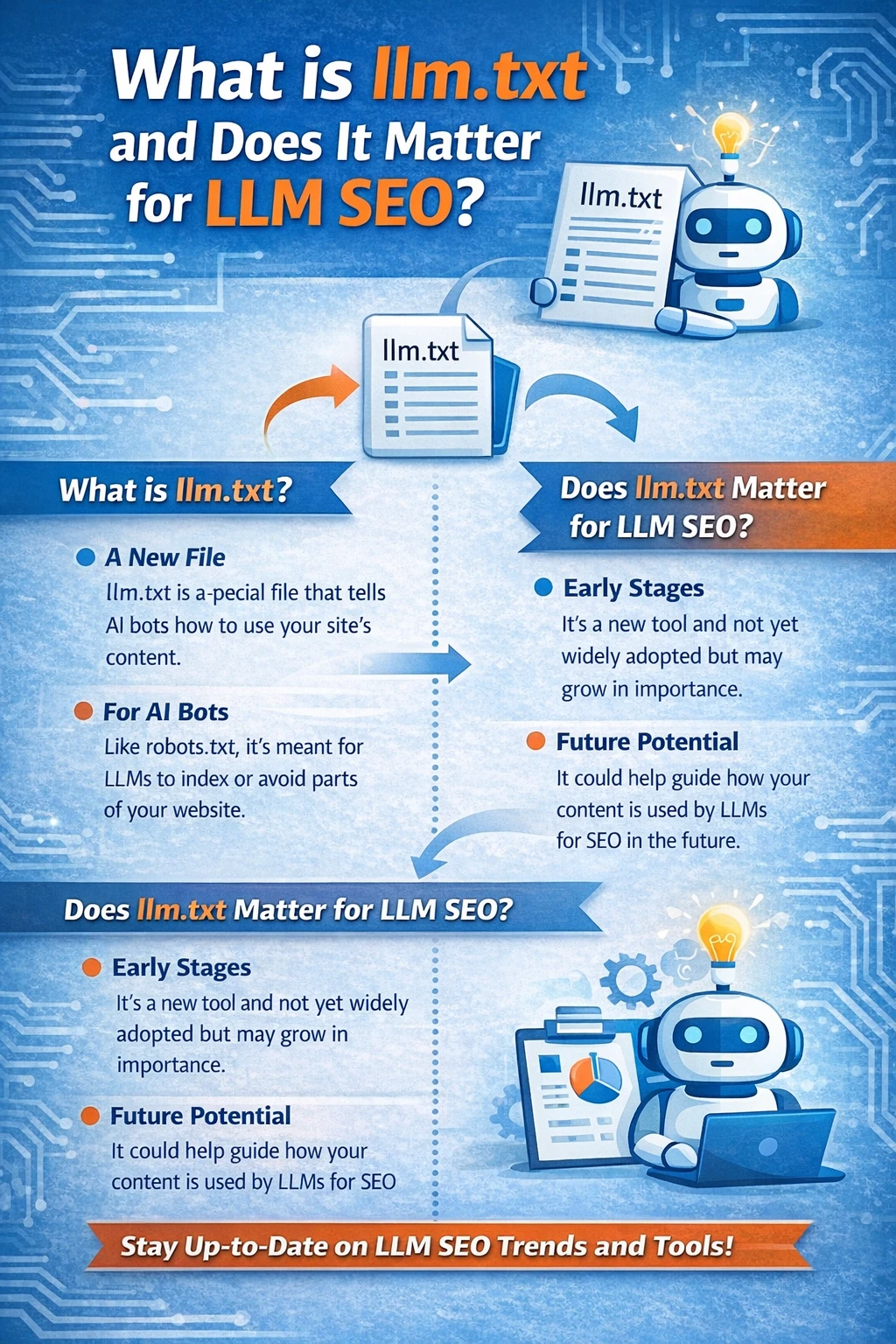

What is llm.txt and Does It Matter for LLM SEO?

As AI crawlers became common, a new file began appearing on websites.

It is called llm.txt.

And it is designed specifically for large language models.

What llm.txt Is

llm.txt is a proposed standard that allows website owners to control how AI systems access and use their content.

It works similarly to robots.txt.

But instead of managing search engine crawlers, it manages AI model crawlers and training pipelines.

With llm.txt, publishers can declare:

- Whether AI bots are allowed to crawl the site

- Which sections are allowed or disallowed

- Whether the content can be used for training

- Whether the content can be used for retrieval and answers

The file sits at the root of a domain.

When an AI crawler that supports the file visits a site, it checks llm.txt before collecting content.

Why llm.txt Exists

The traditional robots.txt file was designed for search indexing.

It was never built to manage AI training or content reuse.

As large language models began scraping large parts of the web, publishers raised concerns about:

- Unauthorized training on licensed content

- Use of paywalled or proprietary data

- Lack of attribution and compensation

llm.txt appeared as a response.

Its goal is to give publishers a way to express consent and control.

Not ranking preferences.

Access permissions.

Does llm.txt Affect Rankings or Citations?

Right now, llm.txt does not directly improve rankings.

It is not a ranking factor.

And it does not guarantee visibility.

Most major AI platforms still rely primarily on search indexes instead of direct crawling.

That means allowing or blocking AI access in llm.txt usually doesn’t change whether your content appears in Google SGE, ChatGPT browsing, or Perplexity.

However, llm.txt can influence future data pipelines.

Some AI crawlers already respect it.

Others are beginning to experiment with it.

Over time, it may become the standard control layer for:

- Training data collection

- Retrieval pipelines

- Content reuse policies

That makes it strategically important, even if the short-term SEO impact is limited.

When llm.txt Matters for LLM SEO

llm.txt becomes relevant in three situations.

First, when you want to block AI training but still allow search indexing.

Second, when you want to allow retrieval and citation but restrict model training.

Third, when you operate a premium or proprietary content site and need legal control.

For most informational websites, llm.txt is not needed for visibility.

But it is useful for governance.

It tells AI systems that your site understands the rules of AI access.

That alone can improve trust over time.

llm.txt vs robots.txt

robots.txt controls crawling for search engines.

llm.txt controls crawling and usage for AI systems.

robots.txt answers the question:

“Can you index this page?”

llm.txt answers a different question:

“Can you use this content to train systems or generate answers?”

They solve different problems.

They are complementary, not replacements.
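
This guide does not specify a syntax for llm.txt, so none is shown here. As a related sketch, the snippet below uses the existing robots.txt mechanism to block two AI-related user agents while leaving other crawlers allowed, then checks the rules with Python's standard `urllib.robotparser`. `GPTBot` and `Google-Extended` are tokens published by OpenAI and Google; confirm the current names in each vendor's documentation before relying on them. The example.com URL is a placeholder.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt: block AI training agents, allow everything else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""


def allowed(user_agent, url, robots_txt=ROBOTS_TXT):
    """Return True if the given user agent may fetch the URL under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for agent in ("GPTBot", "Google-Extended", "Googlebot"):
    print(agent, allowed(agent, "https://example.com/guide"))
```

This covers the "block training, keep search indexing" situation only for crawlers that honor robots.txt. It says nothing about how any platform treats llm.txt.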

The Strategic Role of llm.txt

While llm.txt is still evolving, its long-term role is clear.

AI search will increasingly depend on explicit publisher permissions.

As regulation increases and licensing expands, platforms will prefer content that is:

- Clearly licensed

- Explicitly permitted

- Legally safe

In that future, llm.txt may become a trust signal.

Not for ranking.

But for eligibility.

Websites that block AI entirely may disappear from AI answers.

Websites that allow controlled access may become preferred sources.

Should You Add llm.txt Today?

For most SEO-driven sites, llm.txt is optional.

It will not increase traffic.

It will not improve rankings.

But it does future-proof your site.

It signals awareness.

And it gives you control.

For publishers, agencies, and brands working in AI-heavy industries, that alone is valuable.

llm.txt is not a magic SEO tool.

But it is an early building block of AI-era publishing.

Read More On: My Link Building Plan: How I Build Links That Google Trusts

How Brand Mentions and Entities Affect LLM SEO

LLM SEO is built around entities, not individual pages.

Modern AI systems do not treat every URL as an isolated document. They try to understand who is behind the content, what that source is known for, and whether it has a reputation in the real world. Once a website, brand, or author is recognized as an entity, every piece of content connected to it gains additional trust.

This is one of the biggest structural differences between classic SEO and AI search.

From Pages to Identities

Traditional search engines rank pages.

AI systems rank identities.

Before an LLM evaluates the quality of a specific article, it assesses the source of that article. It looks for signals that indicate whether the website represents a known brand, a recognized expert, or a legitimate organization.

If the system cannot confidently identify who owns the content or what the source is known for, the page starts at a disadvantage. Even well-written articles are often ignored when the identity behind them is weak or unclear.

How Entity Recognition Works

AI platforms maintain large internal knowledge graphs.

These graphs connect brands to industries, authors to topics, companies to products, and publications to subject areas. This information is collected from structured data, author profiles, Wikipedia and Wikidata entries, business listings, press coverage, and repeated citations across the web.

When these signals are consistent, the system learns that a specific entity is considered an authority on a given topic. Once that association exists, future content from the same entity is retrieved and ranked more aggressively.

This process happens continuously and automatically.

Why Established Brands Dominate AI Answers

This is why large brands and well-known publishers appear so frequently in AI search results.

It is not only because their content is better.

It is because their identities are already trusted.

When an AI system processes a query, it often begins by looking for trusted entities that are associated with that topic. Pages from those entities are retrieved first. Smaller or unknown websites may never enter the candidate pool, even if their content is technically stronger.

Trust acts as a filter before ranking even begins.

The Role of Brand Mentions in LLM SEO

In LLM SEO, reputation signals are not limited to backlinks.

AI systems track how often a brand or domain is mentioned across authoritative sources. These mentions appear in news articles, research papers, documentation, industry blogs, and community discussions.

Even when no hyperlink is present, the mention still contributes to entity strength. Over time, repeated associations between a brand and a topic reinforce expertise.

This is why digital PR and brand exposure now directly influence AI rankings.

Mentions build memory.

Author Authority and Personal Entities

Authors are treated as entities as well.

When an author publishes consistently on a subject and their name appears across multiple trusted sources, the system learns that the author has subject-level expertise. Articles written by recognized authors are more likely to be retrieved and cited.

This is one reason why author bios, consistent bylines, and public profiles now matter far more than they did in traditional SEO.
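
A stable byline can also be expressed in markup. This is a minimal sketch of Article markup with a Person author, using real schema.org properties. The author, URLs, and dates are hypothetical, and the helper name is made up for illustration.

```python
import json


def build_article_jsonld(headline, url, author, publisher_name,
                         date_published, date_modified=None):
    """Build a schema.org Article object that names its author and publisher."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "datePublished": date_published,
        # Fall back to the publish date when the page has not been updated.
        "dateModified": date_modified or date_published,
        "author": {
            "@type": "Person",
            "name": author["name"],
            "url": author["url"],
            "sameAs": author.get("same_as", []),
        },
        "publisher": {"@type": "Organization", "name": publisher_name},
    }


# Hypothetical author and article, for illustration only.
article = build_article_jsonld(
    headline="What Is LLM SEO?",
    url="https://example.com/llm-seo",
    author={
        "name": "Jane Doe",
        "url": "https://example.com/authors/jane-doe",
        "same_as": ["https://www.linkedin.com/in/jane-doe-example"],
    },
    publisher_name="Example Media",
    date_published="2026-04-06",
)
print(json.dumps(article, indent=2))
```

Using the same author name and profile URL on every article is the point. The markup only helps if the byline it describes is actually consistent.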

In AI search, credibility follows the author as much as the domain.

How Entity Signals Affect Retrieval

Entity strength influences the retrieval stage, not just ranking.

When a query is processed, many systems restrict candidate documents to sources associated with trusted entities. Pages from unknown or weak entities may never be retrieved, regardless of how well they are optimized.

This creates a silent barrier.

Your page may be technically perfect and still never appear in AI answers because your entity is not considered reliable enough to include.

Building Entity Authority for Long-Term Visibility

Entity authority is built through consistency.

Publishing multiple in-depth articles on a focused topic.

Earning citations from reputable sources.

Keeping clear authorship and organization data.

Being mentioned across trusted publications.

Once an entity is established, visibility compounds.

New pages rank faster.

Citations become more frequent.

The brand becomes a default source for related queries.

In LLM SEO, this effect is stronger than any single backlink.

In AI search, pages win rankings.

But entities win retrieval.

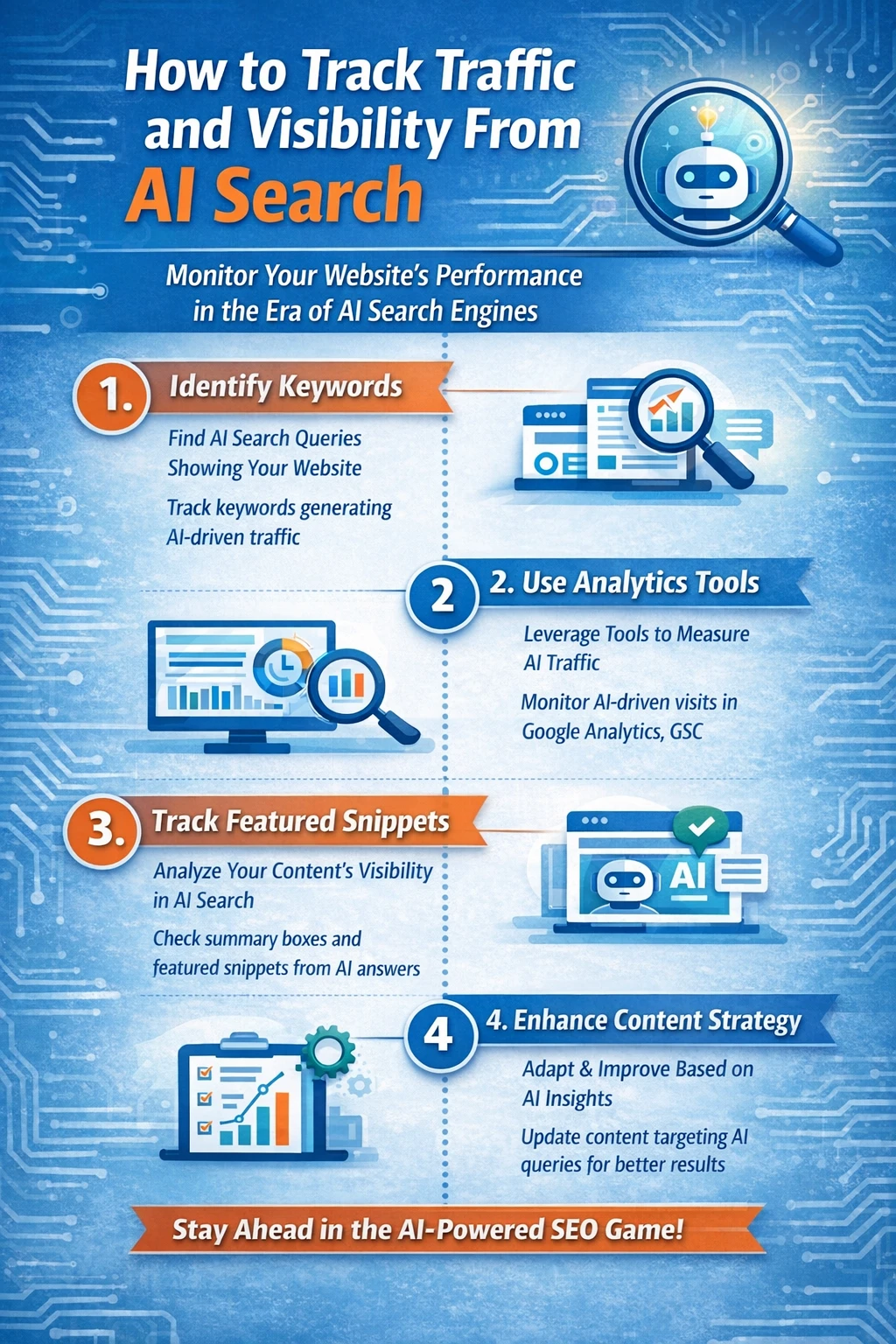

How to Track Traffic and Visibility From AI Search

Tracking traffic from AI search is one of the hardest problems in modern SEO.

Not because the data does not exist, but because most analytics systems were never designed to measure how AI platforms distribute information. Traditional analytics assumes a simple model: a user clicks a link, lands on a website, and the visit is recorded with a referrer. AI search breaks this model almost completely.

In many AI experiences, users never click on any source. They read the answer inside ChatGPT, Perplexity, or Google SGE and move on. When that happens, your content may influence the user without generating a single recorded session. From an analytics perspective, that influence is invisible.

Why AI Traffic Rarely Appears in Analytics

Most AI platforms do not pass consistent referrer data when users click through to a source. Some mask the referrer entirely. Others route traffic through internal redirect systems that strip attribution.

When visits do appear in GA4, they are often misclassified. Many show up as direct traffic. Others appear as unassigned sessions or generic referral traffic from unknown domains. Only a small fraction of AI-powered visits are correctly labeled as coming from an AI platform.

This means that standard organic traffic reports dramatically undercount the real impact of AI search.

In many cases, the majority of AI visibility produces zero measurable traffic.

The Difference Between Traffic and Influence

In LLM SEO, traffic and influence are no longer the same thing.

A traditional search result influences a user only after they click. An AI answer influences the user before any click happens. The user may trust the answer, follow the recommendation, or make a decision without ever visiting the source.

From a branding and authority perspective, this influence is often more valuable than a single visit. But from an analytics perspective, it leaves no trace.

This is why classic SEO metrics such as sessions, clicks, and rankings no longer tell the full story.

How to Detect AI Traffic Indirectly

Although most AI exposure is invisible, some signals still appear.

Occasionally, services such as Perplexity, Copilot, or ChatGPT's browsing mode do pass referrer headers. These visits usually have distinctive patterns. They tend to be low-volume but high-quality, with longer session durations and lower bounce rates.

Over time, you may notice recurring domains sending small amounts of referral traffic. These often correspond to AI interfaces or AI-powered browsers.

This method does not measure influence at scale, but it helps confirm that your content is being used by AI systems.
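
A rough way to spot those recurring domains is to label referrer URLs from your logs or analytics exports. The domain list below is an assumption based on the public hostnames of these products at the time of writing. It is neither complete nor guaranteed to stay current.

```python
from urllib.parse import urlparse

# Assumed hostnames for AI interfaces; review and extend this list yourself.
AI_REFERRER_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "copilot.microsoft.com": "Copilot",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer):
    """Return the AI platform label for a referrer URL, or None if it is not listed."""
    if not referrer:
        return None
    host = (urlparse(referrer).hostname or "").lower()
    for domain, label in AI_REFERRER_DOMAINS.items():
        # Match the domain itself and any subdomain such as www.
        if host == domain or host.endswith("." + domain):
            return label
    return None


for ref in ("https://www.perplexity.ai/search?q=llm+seo",
            "https://www.google.com/",
            ""):
    print(repr(ref), "->", classify_referrer(ref))
```

Visits with a stripped referrer still return `None`, so this only counts the small fraction of AI visits that arrive labeled.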

Measuring AI Visibility Instead of Clicks

Because traffic data is unreliable, LLM SEO focuses on visibility rather than visits.

The primary question is whether your site is cited, referenced, or quoted in AI-generated answers.

The most accurate way to measure this today is manual testing. By running the same queries regularly in ChatGPT, Perplexity, Copilot, and Google SGE, you can track whether your domain appears as a source and how often it is selected.

Over time, patterns emerge. Certain pages are cited repeatedly. Certain queries consistently surface the same sources. This gives you a practical map of your AI search footprint.

This process is slow, but it reflects reality far better than analytics dashboards.
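
Manual testing is easier to sustain with a simple log. The sketch below assumes you record each test run by hand as a dictionary with `platform`, `query`, and `cited_urls` fields. That record shape, the sample entries, and the function name are illustrative choices, not a standard.

```python
from collections import defaultdict
from urllib.parse import urlparse


def citation_rate(test_log, domain):
    """Share of logged test runs, per platform, in which the domain was cited."""
    runs = defaultdict(int)
    hits = defaultdict(int)
    for entry in test_log:
        runs[entry["platform"]] += 1
        hosts = {(urlparse(u).hostname or "").lower() for u in entry["cited_urls"]}
        if any(h == domain or h.endswith("." + domain) for h in hosts):
            hits[entry["platform"]] += 1
    return {platform: hits[platform] / runs[platform] for platform in runs}


# Hypothetical hand-recorded results from three test runs.
test_log = [
    {"date": "2026-04-01", "platform": "Perplexity", "query": "what is llm seo",
     "cited_urls": ["https://example.com/llm-seo", "https://other.example.org/a"]},
    {"date": "2026-04-01", "platform": "ChatGPT", "query": "what is llm seo",
     "cited_urls": ["https://other.example.org/a"]},
    {"date": "2026-04-08", "platform": "Perplexity", "query": "what is llm seo",
     "cited_urls": []},
]

print(citation_rate(test_log, "example.com"))
```

Running the same queries on a fixed schedule and comparing these rates over time gives the footprint map described above.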

The Rise of AI Visibility and Citation Tracking Tools

A new category of SEO tools is emerging to solve this problem.

These platforms monitor large numbers of prompts across multiple AI systems and record which brands and URLs are cited. They track changes in citation frequency, compare visibility against competitors, and identify which pages are becoming default sources.

While still early, these tools represent the future of AI SEO measurement.

For the first time, SEOs can measure exposure even when no clicks occur.

In an AI-first world, this type of tracking becomes more important than keyword rankings.

Tracking Brand Presence Across AI Systems

Brand monitoring is another effective proxy.

Once an entity becomes trusted, AI systems tend to reuse it across many related queries. By tracking brand mentions, author mentions, and domain citations inside AI interfaces, you can estimate how widely your content is influencing answers.

This is especially valuable for measuring growth in authority.

A rising number of mentions usually signals that your entity recognition is strengthening.

The New Metrics That Matter in LLM SEO

In AI search, success is no longer determined solely by traffic.

The most meaningful indicators are how often your site is cited, how frequently your brand appears in answers, how many prompts surface your content, and whether the same pages are reused across multiple queries.
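
Three of these indicators can be computed from the same kind of hand-collected observations. In this sketch, each observation is a `(prompt, cited_urls)` pair. The metric names and the sample data are assumptions for illustration.

```python
from collections import defaultdict
from urllib.parse import urlparse


def visibility_metrics(observations, domain):
    """Summarize citations, prompt coverage, and reused pages for one domain."""
    prompts_by_url = defaultdict(set)
    all_prompts = set()
    for prompt, cited_urls in observations:
        all_prompts.add(prompt)
        for url in cited_urls:
            host = (urlparse(url).hostname or "").lower()
            if host == domain or host.endswith("." + domain):
                prompts_by_url[url].add(prompt)
    covered = set().union(*prompts_by_url.values())
    return {
        # Number of distinct (page, prompt) pairs where the domain was cited.
        "citations": sum(len(p) for p in prompts_by_url.values()),
        # Fraction of tested prompts that cited the domain at least once.
        "prompt_coverage": len(covered) / len(all_prompts) if all_prompts else 0.0,
        # Pages cited for more than one prompt.
        "reused_pages": sorted(u for u, p in prompts_by_url.items() if len(p) > 1),
    }


# Hypothetical observations from three prompts.
observations = [
    ("what is llm seo",
     ["https://example.com/llm-seo", "https://rival.example.net/x"]),
    ("how to track ai traffic",
     ["https://example.com/llm-seo", "https://example.com/tracking"]),
    ("best schema for faq", ["https://rival.example.net/y"]),
]

metrics = visibility_metrics(observations, "example.com")
print(metrics)
```

Brand mentions inside the answer text are not covered here, since they require the answer text itself rather than the list of cited URLs.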

These metrics do not appear in GA4.

But they determine who controls visibility in AI search.

Why Early Measurement Creates a Competitive Advantage

Because most websites still ignore AI visibility, early adopters gain a major advantage.

They learn which formats are preferred, which topics trigger citations, and which pages become long-term sources. This data feeds directly into content strategy and topic selection.

Over time, these sites build a durable position inside AI retrieval systems that competitors struggle to displace.

In AI search, attribution is broken.

But influence is growing.

And the websites that learn how to measure it first will control visibility later.

Common Myths About LLM SEO

Because LLM SEO is new, it is surrounded by confusion.

Much of what is circulating today is either oversimplified, outdated, or based on assumptions carried over from traditional SEO. These myths slow adoption and lead many websites to optimize in the wrong direction.

Understanding what is not true is just as important as understanding what is.

Myth 1: “SEO Is Dead Because of AI”

This is the most common and the most damaging misconception.

AI search does not eliminate SEO. It depends on it.

Large language models do not crawl the web independently at scale. They rely heavily on search engine indexes, retrieval systems, and ranking pipelines that are built on classic SEO infrastructure. If a page is not discoverable, indexable, and credible in search engines, it will not appear in AI answers either.

What has changed is not the importance of SEO, but the output it produces.

Instead of ranking links, SEO now influences which sources power the generation of answers.

SEO has not disappeared.

It has become upstream of AI.

Myth 2: “Backlinks No Longer Matter”

Backlinks still matter.

They remain one of the strongest indicators of trust and authority within retrieval systems.

What has changed is how they are interpreted.

In classic SEO, links mainly influenced rankings. In LLM SEO, links influence whether a source is trusted enough to be retrieved and cited at all. They also feed into citation graphs that AI systems use to determine which sources are central within a topic.

However, links are no longer the only reputation signal.

Brand mentions, publisher citations, and entity associations now play an equally important role.

Backlinks are still necessary.

They are no longer sufficient on their own.

Myth 3: “Schema Alone Can Make You Rank in AI Search”

Structured data is powerful, but it is not a shortcut.

Schema helps AI systems understand your content. It boosts classification, disambiguation, and entity recognition. But it does not override authority.

A weak or untrusted site with perfect markup will rarely be cited.

In competitive queries, schema becomes a tie-breaker, not a primary ranking factor. It improves the chances that a strong page is selected. It does not rescue a weak one.

LLM SEO is still fundamentally trust-driven.

Markup helps the system read.

It does not make the system believe.

Myth 4: “LLMs Only Use Old Training Data”

This myth stems from a misunderstanding of how modern AI systems work.

While training data provides general knowledge, most AI search platforms rely heavily on live retrieval for factual and technical queries. They pull documents from search indexes, rank them, and extract passages in real time.

This is why new pages often appear in AI answers within days of being published.

It is also why freshness matters more in LLM SEO than in classic SEO.

Training sets the baseline.

Retrieval determines visibility.
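
The retrieve, rank, and extract flow can be illustrated with a toy example. This is not how any specific platform works. Real systems use search indexes and far richer ranking signals, while this sketch simply scores passages by how many query words they share. The documents and URLs are made up.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve_passages(query, documents, top_k=2):
    """Rank passages by shared query terms and return the best (url, passage) pairs."""
    query_terms = tokenize(query)
    scored = []
    for url, text in documents.items():
        # Treat each blank-line-separated paragraph as a candidate passage.
        for passage in text.split("\n\n"):
            overlap = len(query_terms & tokenize(passage))
            if overlap:
                scored.append((overlap, url, passage.strip()))
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(url, passage) for _, url, passage in scored[:top_k]]


# Hypothetical mini index of two pages.
documents = {
    "https://example.com/llm-seo": (
        "LLM SEO is the practice of optimizing content for AI answers.\n\n"
        "Our team was founded in 2020."
    ),
    "https://example.org/cooking": "Roast the vegetables for twenty minutes.",
}

results = retrieve_passages("What is LLM SEO?", documents)
for url, passage in results:
    print(url, "->", passage)
```

Only the passage that directly answers the question survives, which is why a newly published page with a clear answer can surface quickly once it is indexed.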

Myth 5: “Clicks No Longer Matter at All”

Clicks matter less than they used to.

But they are not irrelevant.

In many systems, user behavior still feeds feedback loops. Sources that consistently produce useful answers tend to be selected more often. Pages that lead to poor user experiences are gradually downgraded.

What has changed is that clicks are no longer the primary success metric.

Influence now often happens without a visit.

But engagement still shapes long-term trust.

Myth 6: “Only Big Brands Can Win in AI Search”

Large brands have an advantage, but they do not have a monopoly.

AI systems strongly favor topical authority.

A smaller site that publishes consistently, covers a subject deeply, and earns citations from reputable sources can become a dominant entity within a niche.

In many technical and emerging fields, the most cited AI sources are not large publishers.

They are specialized blogs, documentation sites, and individual experts.

The barrier is not size.

It is sustained expertise.

Myth 7: “LLM SEO Is Just Traditional SEO With a New Name”

This is partially true and mostly wrong.

LLM SEO builds on traditional SEO.

But the optimization target is different.

Instead of optimizing for rankings, you are optimizing for retrieval and citation. Instead of optimizing pages, you are optimizing entities. Instead of optimizing clicks, you are optimizing influence.

The fundamentals remain.

The objective has changed.

Why These Myths Persist

Most of these myths exist because AI search is evolving faster than public documentation.

Platforms rarely explain how their systems work.

SEOs fill the gap with assumptions.

And early experiments are often generalized too quickly.

As a result, many sites either ignore LLM SEO entirely or pursue tactics that don’t matter.

Both approaches are costly.

The Future of SEO in an AI-First World

SEO is not disappearing:

It is changing at a structural level. Search engines are no longer only ranking documents. They are generating answers, synthesizing sources, and deciding which brands shape user decisions before a click ever happens. In this new model, visibility matters more than position.

The first major shift is from pages to entities:

AI systems increasingly organize the web around brands, authors, companies, and concepts rather than URLs. Websites that fail to create a clear identity will struggle to appear in AI answers, even if their content is technically strong.

The second shift is from traffic to influence:

In AI search, many users never visit any website. They consume information inside the interface and move on. This means the most valuable outcome is no longer a click, but being the source the system trusts enough to quote.

Content strategy is changing with it:

Instead of chasing isolated keywords, successful sites will build dense topic clusters, publish reference-style guides, and maintain living documents that stay current as models update. Authority will come from coverage and consistency, not volume.

Technical SEO will remain the foundation:

AI systems still depend on crawling, indexing, and retrieval pipelines built on search engine infrastructure. Sites that ignore performance, accessibility, and structured data will never reach the retrieval stage, no matter how good their writing is.

Measurement will change next:

Rankings and sessions will no longer capture real impact. New metrics based on citations, entity visibility, and prompt coverage will become the primary indicators of success. Influence will replace traffic as the core KPI.

The biggest advantage belongs to early adopters:

AI search is still forming its retrieval preferences and default sources. Websites that gain authority now will be reused repeatedly across thousands of future queries. Late entrants will find it difficult to displace the sites already in those positions.

In an AI-first world, SEO becomes upstream of decision-making:

The brands that shape answers will shape markets. And the sites that learn how to optimize for retrieval, trust, and entities today will control visibility tomorrow.

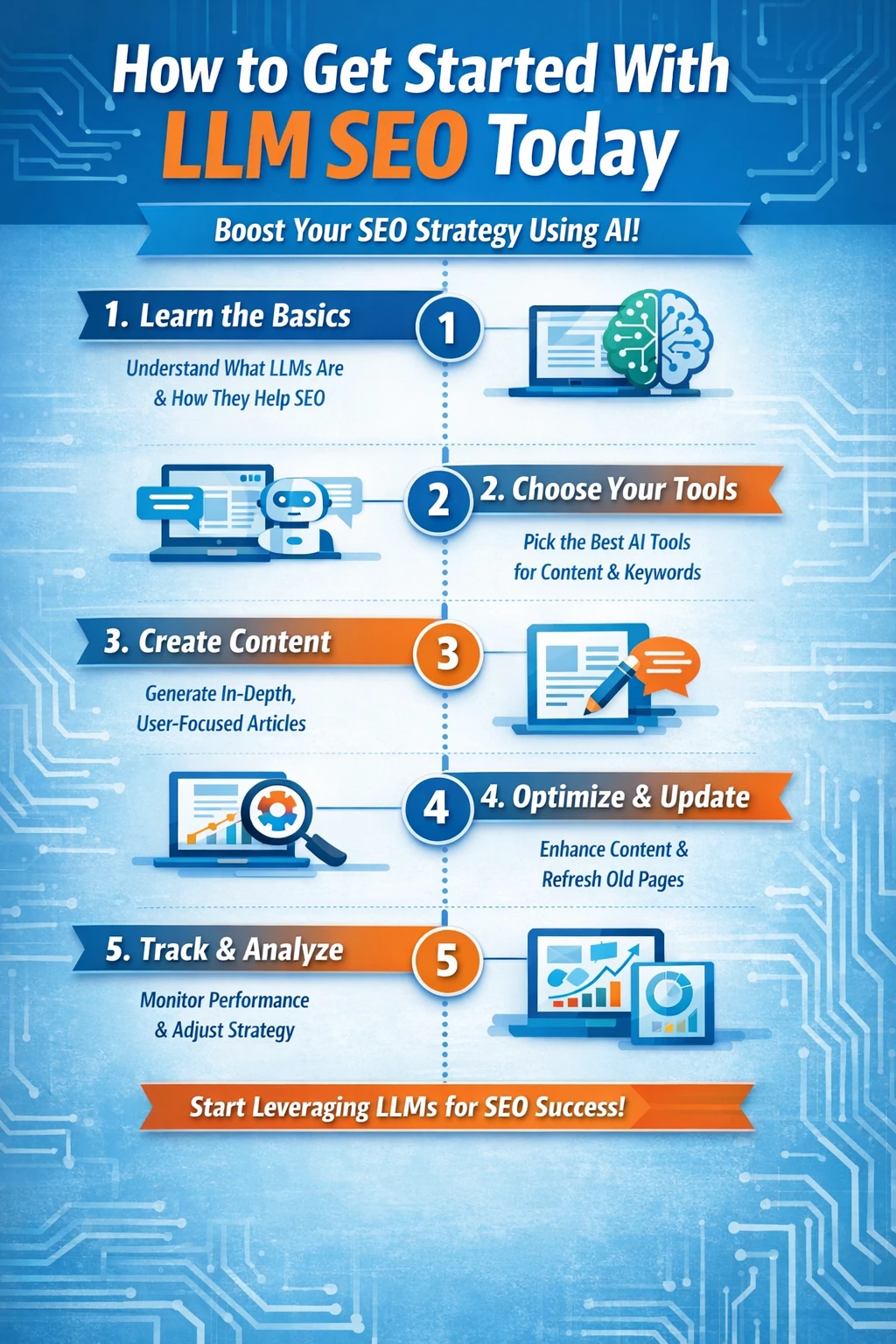

How to Get Started With LLM SEO Today

The best time to prepare for AI search was two years ago.

The second-best time is now.

LLM SEO is still early. Most websites have not adapted their content, structure, or authority strategy for AI retrieval systems. This creates a rare first-mover advantage for sites that act quickly.

Start with fundamentals:

Make sure your site is technically sound, properly indexed, and easy to crawl. Without strong technical SEO, nothing else in LLM SEO works. Retrieval always begins with discoverability.
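
One basic discoverability check is whether a page accidentally carries a `noindex` directive. The sketch below inspects only the robots meta tag in an HTML string, using Python's standard `html.parser`. It does not look at robots.txt or the `X-Robots-Tag` HTTP header, and the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() == "robots":
            for part in attr_map.get("content", "").split(","):
                self.directives.add(part.strip().lower())


def is_indexable(html):
    """Return False when the robots meta tag contains noindex or none."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return not ({"noindex", "none"} & parser.directives)


blocked = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
open_page = "<html><head><title>Guide</title></head></html>"
print(is_indexable(blocked), is_indexable(open_page))
```

Running a check like this across your key pages catches one common reason content never reaches the retrieval stage.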

Then build focused topical authority:

Choose one subject area and cover it in depth with related guides, definitions, and technical articles. AI systems reward consistency far more than volume. One strong cluster beats fifty disconnected posts.

Optimize how your content communicates:

Place answers early. Write clear definitions. Structure pages so each section can stand alone as a reusable answer. Think less about persuasion and more about precision.

Strengthen your identity:

Add clear authorship, consistent branding, and structured data that connects your content to real-world entities. Publish under stable bylines. Build a reputation that machines can recognize.

Invest in visibility, not just rankings:

Track where your brand appears in AI answers. Monitor citations as well as mentions. Learn which formats and topics generate reuse. Traffic will follow influence, not the other way around.

Most importantly, treat LLM SEO as a system:

Authority, structure, freshness, reputation, and governance all work together. Sites that optimize only one layer rarely succeed. Sites that build the full system become default sources.

AI search is not a threat to SEO.

It is the next version.

And the websites that adapt now will define how search works for the next decade.

FAQs

What is LLM SEO?

LLM SEO is the process of optimizing your website so large language models like ChatGPT, Gemini, and Perplexity can discover your content, understand it correctly, and use it as a source in AI-generated answers. Instead of focusing only on ranking in Google, LLM SEO focuses on being selected and cited when AI systems generate responses. The objective is not just visibility in search results, but also visibility within AI-generated answers themselves.

How is LLM SEO different from traditional SEO?

Traditional SEO optimizes pages to rank higher in search results and attract clicks. LLM SEO optimizes content so it can be retrieved, trusted, and reused by AI systems. Instead of competing for rankings, you compete to become one of the few sources an AI model selects when generating an answer. Keywords still matter, but authority, entities, structure, and clarity matter far more.

Does LLM SEO replace traditional SEO?

No. LLM SEO does not replace traditional SEO.

AI search systems depend heavily on search engine indexes, crawling infrastructure, and ranking signals that come from classic SEO. If your site is not discoverable and credible in Google or Bing, it will not appear in AI answers either. LLM SEO builds on traditional SEO and extends it towards AI-driven retrieval and citation systems.

How do AI search engines find content?

Modern AI search systems use a combination of training data and live retrieval. Training data provides general knowledge, but most factual and technical queries depend on real-time retrieval from search engine indexes, proprietary crawlers, and licensed publisher sources. When a user asks a question, the system retrieves relevant pages, ranks them, extracts passages, and generates an answer using those sources.

What makes a website more likely to be cited in AI answers?

Websites are more likely to be cited when they demonstrate strong topical authority, clear structure, and high trust. AI systems prefer pages that answer questions directly, use consistent terminology, include strong authorship signals, and belong to recognized entities. Brand mentions, editorial citations, structured data, and fresh content all increase the probability of being selected as a source.

Does schema markup help with LLM SEO?

Schema markup does not directly guarantee citations, but it significantly improves how AI systems understand your content. Structured data helps classify pages, identify authors and organizations, validate freshness, and connect entities across the web. In competitive queries, schema often becomes the deciding factor between two sources of equal authority.

What is llm.txt, and do I need it?

llm.txt is a proposed standard that allows website owners to control how AI crawlers access and use their content. It does not improve rankings or increase visibility today. Its main purpose is governance, not optimization. However, adding llm.txt can future-proof your site and clarify whether your content can be used for training or retrieval as AI regulation and licensing evolve.

Can small websites compete with big brands in AI search?

Yes.

LLM SEO strongly favors topical authority over brand size. Many AI systems cite niche blogs, documentation sites, and individual experts when they demonstrate deep, consistent expertise in a specific subject. A smaller site with focused coverage and strong citations can outperform large brands in technical and emerging topics.

How do I track traffic from AI search?

Most AI traffic does not appear clearly in analytics because many users never click a source link. When traffic does appear, it is often misclassified as direct or unassigned. The best way to track AI impact today is to monitor citations, brand mentions, and visibility across AI platforms, rather than depending exclusively on GA4 sessions.

Is LLM SEO worth investing in?

Yes. LLM SEO is becoming a core part of search strategy.

As AI answers replace more traditional search results, the websites that shape those answers will control visibility, brand perception, and demand generation. Sites that build authority early will become default sources that are reused across thousands of future queries.